Taking the Queen and Losing the Game

A lot of drug discovery ML still treats the problem as if the goal is to identify the best molecule. Score a set of compounds, rank them, take the one at the top, repeat.

That framing is clean. It is also wrong.

In a real small-molecule project, you are not optimising a static list of molecules. You are optimising a sequence of decisions under uncertainty. The unit of optimisation is not the molecule. It is the project.

A chess engine would never think this way

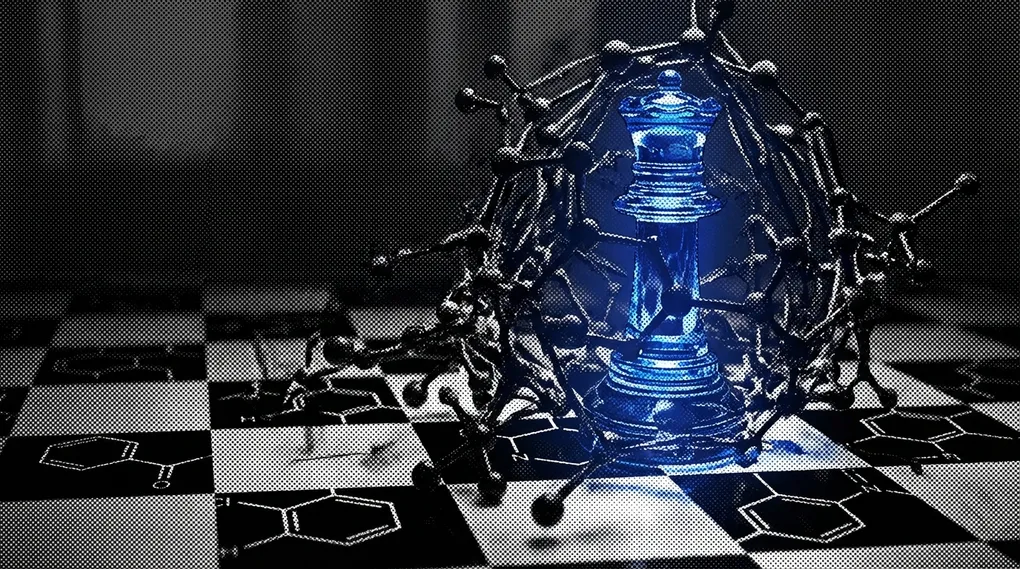

Imagine a chess engine that evaluates a position by looking for the most valuable piece it can take on the next move.

Capturing a queen is good. Except sometimes taking the queen loses the game. Sometimes the queen is poisoned. Sometimes grabbing material opens a mating net. Sometimes the right move is quiet, defensive, or positional.

Any serious chess player understands this. The game is not won by greedily taking the highest-value object on the board. It is won by making moves that improve the whole future position.

Drug discovery is the same. The highest-scoring molecule is often the equivalent of taking the queen and losing three moves later.

The best-looking compound is often the wrong project move

Suppose a model proposes a compound with the highest predicted potency. What is that proposal actually asking the project to do?

It may be asking for a long bespoke synthesis, a new intermediate, a low-confidence route, a painful purification, a week or two of chemistry effort, and a delayed assay turnaround. It may also be a molecule that teaches very little if it fails: a result that does not clarify the key uncertainty in the series.

That is not one molecular suggestion. That is a project move. And as in chess, the value of the move depends on what it does to the whole position.

A slightly less exciting analogue that is fast to make, probes an uncertainty cleanly, and sharpens the next round of design may be far more valuable than the supposedly best compound on paper.

This is where molecular ML goes wrong. It scores molecules one by one, while projects live or die on path dependence.

Discovery is a sequential game

Most real programmes are not asking: Which molecule is best in absolute terms?

They are asking: What should we do next, given what we know, what we do not know, and what it will cost to learn more?

The next molecule should not just have a high expected score. It should improve the future state of the project. That can mean tightening local SAR, separating competing hypotheses, testing whether a liability is intrinsic or fixable, checking whether a vector is truly open, or generating an easy synthesis that gets back on the assay queue quickly.

These are project questions, not molecule questions. A model that ignores them may still look impressive in a benchmark. It will often be mediocre in practice.

The greedy move is often the losing move

A lot of models behave as though choosing the next compound is a greedy maximisation problem. Pick the highest predicted pIC50, the best multi-parameter optimisation (MPO) score, or the best multi-objective aggregate.

But greedy play is exactly what good chess players learn not to do. The superficially best move can worsen the deeper position.

In discovery, the top-scoring compound may consume too much synthesis time, fail to resolve the key uncertainty, sit outside the practical chemistry bandwidth of the team, generate data that do not transfer well to the next round, or cause the project to overcommit prematurely in the wrong direction.

The best next molecule is often not the highest-scoring one. It is the move that best improves the state of the programme.
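
To make the contrast concrete, here is a minimal sketch of greedy selection next to a crude "move value" score. Everything in it is an assumption made for illustration: the `Candidate` fields, the `move_value` formula (expected potency gain plus a weighted information term, divided by synthesis time), and the toy numbers are all hypothetical, not a recommended scoring function.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    # All fields are hypothetical inputs a project team would have to estimate.
    name: str
    predicted_pic50: float  # model point estimate
    synthesis_days: float   # estimated time to make and test
    p_success: float        # chance the route delivers the compound
    info_value: float       # 0..1, how much the result resolves the key uncertainty


def greedy_pick(candidates):
    """Take the queen: highest predicted potency, nothing else considered."""
    return max(candidates, key=lambda c: c.predicted_pic50)


def move_value(c, baseline_pic50, info_weight=1.0):
    """Illustrative score: (expected potency gain + information) per day."""
    expected_gain = c.p_success * max(c.predicted_pic50 - baseline_pic50, 0.0)
    return (expected_gain + info_weight * c.info_value) / c.synthesis_days


def project_pick(candidates, baseline_pic50, info_weight=1.0):
    """Pick the candidate with the best illustrative move value."""
    return max(candidates, key=lambda c: move_value(c, baseline_pic50, info_weight))


# Toy numbers, invented purely for illustration.
candidates = [
    Candidate("ambitious_macrocycle", 8.6, 14.0, 0.40, 0.1),
    Candidate("para_fluoro_analogue", 7.4, 2.0, 0.90, 0.6),
    Candidate("methyl_scan", 7.1, 1.5, 0.95, 0.3),
]
baseline = 7.0  # current best measured pIC50 in the series (hypothetical)

print("greedy:", greedy_pick(candidates).name)
print("project-aware:", project_pick(candidates, baseline).name)
```

With these invented numbers the greedy rule picks the ambitious compound, while the move-value rule prefers the quick para-fluoro analogue. The particular formula matters much less than the fact that time, route risk, and information enter the decision at all.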

The project has state — most benchmarks pretend it does not

Benchmarks are often set up as if each prediction is independent. Here is a molecule — predict its property. Here is another — rank its activity.

But real projects are stateful. They contain history, momentum, local SAR, failed motifs, route knowledge, assay bottlenecks, known liabilities, untested hypotheses, and internal beliefs about what is likely to work.

That state changes the value of every new compound. A para-fluoro analogue may be extremely valuable in one moment and almost worthless in another, depending on what the team already knows. A scaffold hop may be brilliant at one stage and idiotic at another.

There is no molecule value independent of project context. There is only move value given position.

The best move in discovery is often the one that produces the most useful information per unit time.

In chess, you sometimes sacrifice material to gain initiative, open lines, or expose the king. The move is good not because of what it wins immediately, but because of what it reveals and enables.

In medicinal chemistry, an easy-to-make analogue can do the same thing. A compound might be worth making because it tells you whether a potency trend is real, whether a liability is vector-specific, whether a binding hypothesis survives a subtle perturbation, or whether an apparently strong direction is actually a false dawn.

Those are valuable outcomes even when the molecule itself is not destined to become the development candidate. A benchmark that only rewards absolute predictive accuracy misses this entirely.
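
One way to put a number on "information per unit time" is expected entropy reduction over a yes/no hypothesis, divided by how long the experiment takes. The sketch below assumes a single binary hypothesis (say, "this potency trend is real") and a binary assay readout. The function names, probabilities, and timings are hypothetical, and real projects juggle many interacting hypotheses at once.

```python
import math


def entropy(p):
    """Binary entropy in bits of a belief p that the hypothesis is true."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log2(p) + (1 - p) * math.log2(1 - p))


def expected_info_gain(prior, p_pos_if_true, p_pos_if_false):
    """Expected drop in entropy (bits) after seeing a positive/negative result."""
    p_pos = prior * p_pos_if_true + (1 - prior) * p_pos_if_false
    p_neg = 1 - p_pos
    # Bayes' rule for the belief after each possible outcome.
    post_pos = prior * p_pos_if_true / p_pos if p_pos > 0 else prior
    post_neg = prior * (1 - p_pos_if_true) / p_neg if p_neg > 0 else prior
    expected_posterior = p_pos * entropy(post_pos) + p_neg * entropy(post_neg)
    return entropy(prior) - expected_posterior


def info_per_day(prior, p_pos_if_true, p_pos_if_false, days):
    """Expected bits learned per day of elapsed project time."""
    return expected_info_gain(prior, p_pos_if_true, p_pos_if_false) / days


# Hypothetical comparison: a clean two-day probe vs. a slow, ambiguous design.
clean_probe = info_per_day(0.5, 0.9, 0.1, days=2.0)
slow_ambiguous = info_per_day(0.5, 0.6, 0.5, days=14.0)
print(f"clean probe:    {clean_probe:.3f} bits/day")
print(f"slow ambiguous: {slow_ambiguous:.4f} bits/day")
```

Under these assumed likelihoods the quick, discriminating analogue is worth far more per day than the slow design whose result would look much the same whether or not the hypothesis holds.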

Simple local models win because the task is sequential

People sometimes find it disappointing that simple local models do so well in live project settings.

They should not. That is exactly what you would expect if the true task is local sequential decision-making. The point is not to demonstrate universal chemical intelligence. The point is to improve the next few project moves.

A humble project-level SAR model, trained on the series at hand, can be more useful than a more sophisticated global model — not because it is more elegant, but because it understands the current board position better.

The wrong optimisation target creates the wrong behaviour

If you train and benchmark models as though the unit of value is the individual molecule, you get models that over-prioritise extreme point estimates, underweight tractability, ignore information value, treat all predictions as equally actionable, and optimise for static rankings rather than dynamic decisions.

This is one reason impressive models feel oddly unhelpful when deployed. They answer the question: Which molecule looks best? The project needs an answer to a different one: What should we do next?

The queen trap appears everywhere

Once you start looking, you see this pattern constantly.

A team reaches for the highest predicted potency gain, but the chemistry is so awkward that two weeks disappear and the project learns almost nothing. A model suggests a scaffold hop before local vectors have been properly explored. A series is abandoned because the top few ambitious designs fail, even though faster, simpler experiments would have clarified the path forward. An ADME fix is pursued because it looks large on paper, but it muddies the SAR and teaches less than a smaller, cleaner perturbation.

These are all versions of taking the queen and losing the game. The move looks strong in isolation. It looks bad as part of a sequence.

The benchmark should reflect the real game

If the true unit of optimisation is the project, benchmarks should stop pretending the job is static molecule scoring. They should ask whether the model improves the trajectory of a live programme: sequential design quality, top-k choices under chemistry constraints, speed of local learning, decision quality after each assay round, compounds avoided, time saved, and information gained per synthesis cycle.

Those are much harder benchmarks. They are also much closer to the actual game being played.
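
As a rough sketch of what a trajectory-style evaluation could look like, the toy harness below replays a selection policy against a fixed time budget and records the best measured potency over elapsed days. It is deliberately simplified: the `Compound` fields, both policies, and all numbers are hypothetical, and the model is not retrained between rounds, which a realistic sequential benchmark would need to do.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Compound:
    # Toy values, invented for illustration only.
    name: str
    predicted: float  # model's predicted pIC50
    measured: float   # outcome revealed only after the compound is made
    days: float       # synthesis plus assay time


def run_campaign(pool, policy, budget_days):
    """Replay a policy; return [(elapsed_days, best_measured_so_far), ...]."""
    remaining = list(pool)
    elapsed = 0.0
    best = float("-inf")
    trajectory = []
    while remaining:
        # Only compounds that still fit in the remaining time are playable moves.
        affordable = [c for c in remaining if elapsed + c.days <= budget_days]
        if not affordable:
            break
        choice = policy(affordable)
        remaining.remove(choice)
        elapsed += choice.days
        best = max(best, choice.measured)
        trajectory.append((elapsed, best))
    return trajectory


def greedy_policy(options):
    """Always chase the highest predicted score."""
    return max(options, key=lambda c: c.predicted)


def rate_policy(options):
    """Crude stand-in for a cost-aware policy: predicted score per day."""
    return max(options, key=lambda c: c.predicted / c.days)


pool = [
    Compound("A", predicted=8.5, measured=7.2, days=14.0),
    Compound("B", predicted=7.8, measured=7.9, days=3.0),
    Compound("C", predicted=7.6, measured=7.5, days=2.0),
    Compound("D", predicted=7.4, measured=8.1, days=4.0),
]

greedy_trajectory = run_campaign(pool, greedy_policy, budget_days=14.0)
rate_trajectory = run_campaign(pool, rate_policy, budget_days=14.0)
print("greedy:", greedy_trajectory)
print("rate:  ", rate_trajectory)
```

In this invented pool the greedy policy spends the whole budget on one compound, while the cost-aware policy completes three cycles in the same window. The comparison is about the shape of the trajectory rather than the accuracy of any single prediction.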

The right question is not: What is the best molecule?

It is: What is the best next move for the project?

Until models and benchmarks are built around that question, drug discovery AI will keep making the same mistake: confusing a good-looking move with a good position.